The Six Levels of AI: From Machine Learning to Agentic Systems

People throw around "AI" as if it's one thing. It isn't. When your finance team runs fraud detection models, that's different from using Copilot in Teams, which is different again from an agent autonomously handling IT tickets.

Each of these is a different level of AI capability — different in what it can do, how it works, and how much human involvement it needs. The terminology gets blurry quickly (is Copilot an agent?), so having a clear mental model of the stack is worth the time.

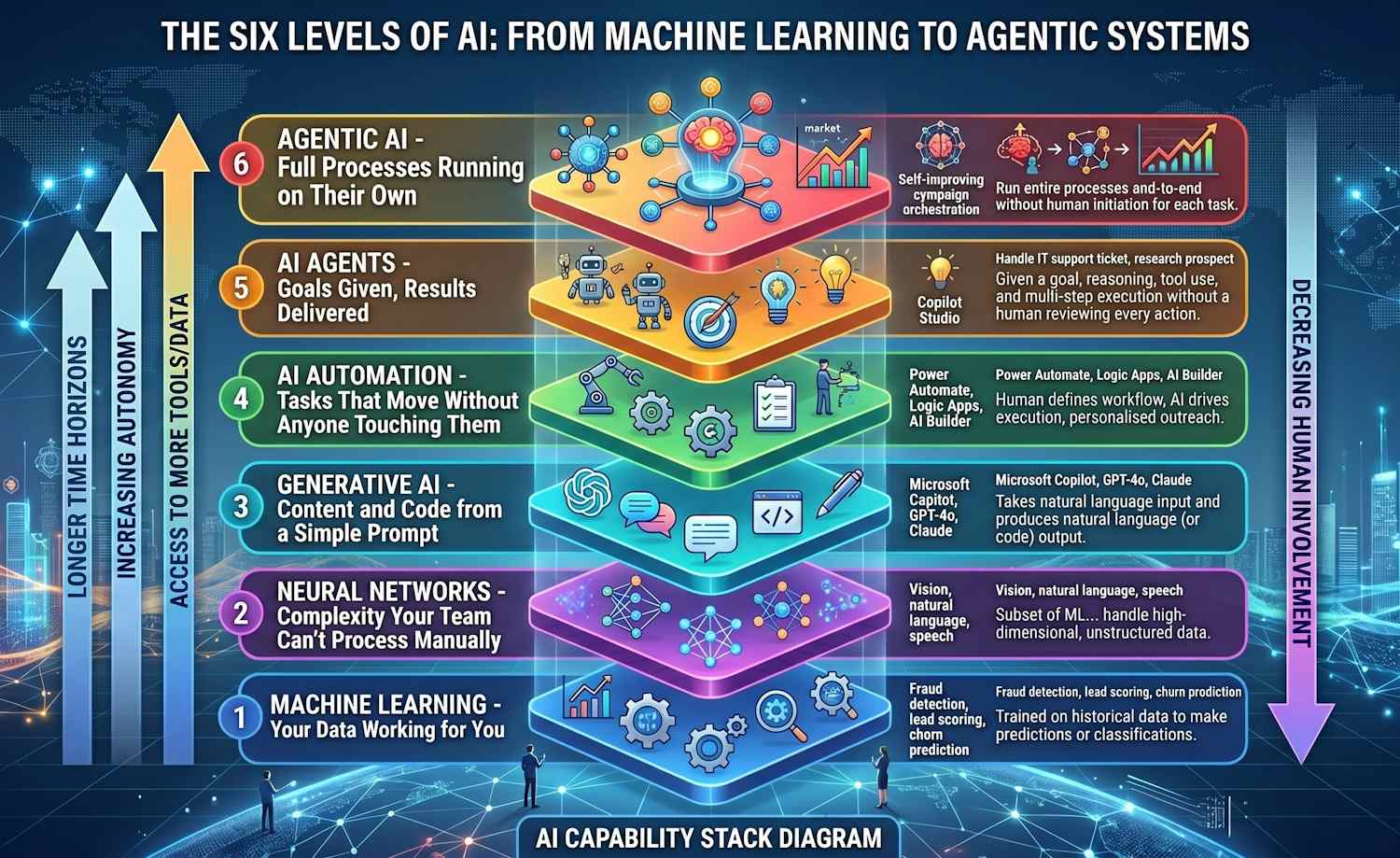

Below is how I think about the six levels, from the narrowest and most controlled at the bottom to the broadest and most autonomous at the top.

The Stack

The diagram below shows the six levels as I think about them, from the narrowest and most controlled at the bottom to the broadest and most autonomous at the top:

The key thing to notice: as you move up the stack, the AI system takes on more autonomy, has access to more tools and data, and operates over longer time horizons. The degree of human involvement decreases at every step.

Machine Learning — Your Data Working for You

Machine learning sits at the base. These are systems trained on historical data to make predictions or classifications: fraud detection, lead scoring, churn prediction, anomaly detection, buying signal analysis. The model learns patterns from examples and applies them to new inputs. It doesn't generate content or hold a conversation — it just outputs a number, a label, or a probability.

In an enterprise context you'll find ML models embedded in security tooling (anomaly detection in Defender, risk scoring in Entra ID), in business analytics (Power BI ML features, Azure Machine Learning pipelines), and in third-party SaaS products doing things like spam filtering and invoice processing.

What makes most of these models actually work is labelled training data, and lots of it. The model doesn't know anything before training; it builds its understanding entirely from the examples you feed it. That's also the most common reason ML projects fail. The algorithm is rarely the problem. The training data is: incomplete, biased, or not representative of the real situations the model will face in production.
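The "learns entirely from examples" point is easier to see in code. Below is a minimal sketch of supervised ML: a logistic regression written from scratch in plain Python. It was chosen for readability; in practice you would use a library such as scikit-learn. The weights start at zero, so everything the model "knows" comes from the labelled examples. The transactions and the two features (amount in thousands, a foreign-transaction flag) are invented for illustration. They are not how any real fraud product works.

```python
import math

def predict_proba(weights, bias, features):
    """Return the model's probability that an input belongs to class 1."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-score))  # sigmoid squashes the score into 0..1

def train_logistic_regression(examples, labels, lr=0.5, epochs=2000):
    """Fit weights and bias by batch gradient descent on log-loss."""
    n_samples, n_features = len(examples), len(examples[0])
    weights, bias = [0.0] * n_features, 0.0  # the model starts knowing nothing
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for features, label in zip(examples, labels):
            error = predict_proba(weights, bias, features) - label
            for i, x in enumerate(features):
                grad_w[i] += error * x
            grad_b += error
        weights = [w - lr * g / n_samples for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n_samples
    return weights, bias

# Invented toy data: [amount in thousands, is_foreign]; label 1 = fraud, 0 = legitimate.
X = [[0.1, 0], [0.2, 0], [0.3, 0], [0.4, 1], [2.0, 1], [2.5, 1], [3.0, 0], [2.8, 1]]
y = [0, 0, 0, 0, 1, 1, 1, 1]

weights, bias = train_logistic_regression(X, y)
# The output is only a probability; acting on it is left to whatever consumes the score.
print(f"P(fraud) for a 2.7k foreign transaction: {predict_proba(weights, bias, [2.7, 1]):.2f}")
```

Swap in a biased or unrepresentative `X` and the same code will confidently learn the wrong thing. That is the failure mode described above.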

Neural Networks — Complexity Your Team Can't Process Manually

Neural networks are technically a subset of ML — the distinction here is really about model complexity and what you're using them for. Simpler ML (linear regression, decision trees, gradient boosting) works well with structured tabular data and clear features. Neural networks handle high-dimensional, unstructured data: vision, natural language, speech, and large-scale pattern detection that would be impossible to hand-engineer features for.
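Hidden layers are what let a neural network go beyond hand-engineered features. Each layer builds intermediate features out of the previous one, so the network can represent patterns that no single straight-line rule can. The minimal sketch below uses the classic XOR example. No single linear unit can output 1 when exactly one input is on, but two layers can. The weights are hand-picked so the example stays deterministic; a real network learns millions or billions of them from data.

```python
def step(z):
    """Threshold activation: fire (1) if the weighted input is positive."""
    return 1 if z > 0 else 0

def neuron(inputs, weights, bias):
    """One unit: weighted sum of inputs plus bias, passed through the activation."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)

def xor_network(x1, x2):
    # Hidden layer: two units, each drawing one straight-line boundary.
    h_or = neuron([x1, x2], [1, 1], -0.5)   # fires if at least one input is on
    h_and = neuron([x1, x2], [1, 1], -1.5)  # fires only if both inputs are on
    # Output layer combines the hidden features: "OR but not AND" is XOR.
    return neuron([h_or, h_and], [1, -1], -0.5)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
```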

The models behind generative AI — transformers like GPT — are neural networks, which is why Levels 2 and 3 often get blurred. The distinction worth keeping is that Level 2 neural networks are still narrow: an image classifier trained on manufacturing defects doesn't write text; a speech model transcribes audio and stops there. The "generative" leap at Level 3 happens when you train a model on vast mixed data and ask it to produce new content, not just categorise existing content.
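The difference between categorising and generating shows up even at toy scale. The sketch below is deliberately not a transformer or even a neural network. It is a word-level bigram model, used as a simple stand-in: it learns which word tends to follow which, then produces text one word at a time, feeding each word it outputs back in as the next input. That generate-and-append loop is the same autoregressive pattern GPT-style models use. The difference is that they use a vastly more capable model to choose each next token. The corpus is invented.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for every word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def generate(follows, start, max_words=8):
    """Autoregressive loop: predict the next word, append it, repeat."""
    output = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(output[-1])
        if not candidates:
            break  # no known continuation for this word
        # Greedy decoding: always take the most frequent follower.
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)

corpus = [
    "copilot drafts a reply",
    "a reply confirms the meeting",
    "the meeting starts at noon",
]
model = train_bigrams(corpus)
print(generate(model, "copilot", max_words=12))
# -> copilot drafts a reply confirms the meeting starts at noon
```

The output is a word sequence that appears nowhere in the training data. It is crude, but it is new content rather than a label.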

Generative AI — Content and Code from a Simple Prompt

This is the layer most people are actually thinking about when they say "AI." Generative AI (large language models like GPT-4o, Claude, Gemini, and the models behind Microsoft Copilot) takes a natural language input and produces natural language (or code) output. CRM updates, email drafts, proposal writing, code generation, onboarding documentation, instant follow-ups: all of these are common enterprise uses.

One important nuance: out-of-the-box, a generative model only knows what it was trained on. It has no access to your company's data, your latest documents, or what happened yesterday. Retrieval-Augmented Generation (RAG) is the standard fix — you retrieve relevant content from your own data store and include it in the prompt as context. That's how Microsoft Copilot for Microsoft 365 works: it searches your emails, meetings, and documents and passes the relevant chunks to the model before it responds.
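The retrieve-then-prompt pattern can be sketched in a few lines. This is a minimal illustration under stated assumptions. Retrieval here is crude word overlap, standing in for the semantic or vector search a real system would use. The document names and contents are hypothetical. The final call to the model is left out, because that depends entirely on which service you use.

```python
import re

def tokenize(text):
    """Lower-case word set; a crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, top_k=2):
    """Rank documents by how many query words they share; drop non-matches."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d["text"])), reverse=True)
    return [d for d in ranked[:top_k] if q & tokenize(d["text"])]

def build_prompt(query, documents, top_k=2):
    """Put the retrieved chunks into the prompt as grounding context."""
    chunks = retrieve(query, documents, top_k)
    context = "\n".join(f"[{d['source']}] {d['text']}" for d in chunks)
    return (
        "Answer using only the context below. If the answer is not there, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

# Hypothetical company documents the base model was never trained on.
docs = [
    {"source": "policy.docx", "text": "Expense claims over 500 GBP need director approval."},
    {"source": "minutes-0312.txt", "text": "The team agreed to move the launch to April."},
    {"source": "faq.md", "text": "Travel bookings go through the internal portal."},
]
prompt = build_prompt("Who approves expense claims over 500 GBP?", docs)
print(prompt)  # this string, not the bare question, is what would be sent to the model
```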

AI Automation — Tasks That Move Without Anyone Touching Them

AI automation is where the AI starts taking actions rather than just producing outputs. A human still defines the workflow, but AI drives execution: personalised outreach that sends itself, lead routing that triggers CRM updates, campaign management that adjusts spend, hiring pipelines that filter and schedule candidates.

In Microsoft terms, this is the layer where Power Automate, Logic Apps, and AI Builder live. The automation tools are mature; the AI components are newer and evolving quickly.

The meaningful difference from traditional rule-based automation is that the AI component can handle variation. Rule-based automation breaks when the input doesn't match the expected format. AI automation can read an unstructured email, extract the intent, and trigger the right downstream action, even if every incoming message is worded differently. That flexibility is what makes it genuinely useful for customer-facing and operational workflows.
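The split between "human defines the workflow" and "AI drives execution" can be sketched as follows. Everything here is hypothetical. `keyword_intent` stands in for the AI step, which in a real flow might be an AI Builder model or an LLM call. Keyword matching is used only so the sketch runs without a model, and it is exactly the brittle approach a real model replaces. The classifier is passed in as a parameter, so the AI component can be swapped without touching the workflow.

```python
def keyword_intent(email_body):
    """Hypothetical stand-in for the AI step. A real model would cope with
    phrasings it has never seen; keywords are used here only so this runs."""
    text = email_body.lower()
    signals = {
        "cancel_order": ["cancel", "don't want", "no longer need"],
        "billing_query": ["invoice", "charged", "refund", "payment"],
        "update_address": ["moved", "new address", "change my address"],
    }
    for intent, phrases in signals.items():
        if any(phrase in text for phrase in phrases):
            return intent
    return "unknown"

# The human-defined part of the workflow: each intent maps to one downstream action.
ROUTES = {
    "cancel_order": "create cancellation in order system",
    "billing_query": "open case for finance team",
    "update_address": "update customer record in CRM",
}

def handle_email(email_body, classify=keyword_intent):
    """Run one message through the workflow: classify it, then trigger the mapped action."""
    intent = classify(email_body)
    # Anything the AI step can't interpret falls back to a person.
    return ROUTES.get(intent, "send to human review queue")

inbox = [
    "Hi, I've moved house, please use my new address from now on",
    "Why was I charged twice this month?",
    "Can you tell me your opening hours?",
]
for message in inbox:
    print(handle_email(message))
```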

AI Agents — Goals Given, Results Delivered

An AI agent is given a goal — "handle this IT support ticket", "research this prospect and prepare a briefing", "manage the product launch checklist" — and it works out how to achieve it. That means reasoning, tool use, and multi-step execution without a human reviewing every action.

In 2026 this is no longer a research topic. Microsoft Copilot Studio agents, GitHub Copilot Workspace, Azure AI Agent Service, and open-source frameworks like Microsoft's AutoGen are all in production use. If your organisation is running Copilot Studio, you're running agents.

What makes something an agent rather than just automation is the planning loop. An agent doesn't just execute a fixed sequence of steps — it decides what to do next based on what it already knows, what tools are available, and what the goal requires. It can call an API, read the response, decide that's not enough, call a different API, and iterate until it has a satisfactory answer. That planning capability is what separates Level 5 from Level 4.
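The planning loop is the part worth seeing in code. The minimal sketch below has hypothetical names throughout. `plan_next_step` is a rule-based stand-in for the LLM that would do the planning in a real agent, and the three tools return canned data instead of calling real APIs. The structure is the point: plan, act, observe, re-plan, with a hard step cap.

```python
from dataclasses import dataclass, field

@dataclass
class AgentState:
    goal: str
    observations: dict = field(default_factory=dict)
    steps: list = field(default_factory=list)

# Hypothetical tools; a real agent would call APIs here.
def lookup_user():
    return {"user": "j.smith", "device": "laptop-042"}

def check_device_health():
    return {"disk_free_gb": 1.2}

def clear_temp_files():
    return {"disk_free_gb": 18.7}

TOOLS = {
    "lookup_user": lookup_user,
    "check_device_health": check_device_health,
    "clear_temp_files": clear_temp_files,
}

def plan_next_step(state):
    """Stand-in planner: pick the next tool from what is known so far,
    or return None when the goal looks satisfied."""
    obs = state.observations
    if "user" not in obs:
        return "lookup_user"
    if "disk_free_gb" not in obs:
        return "check_device_health"
    if obs["disk_free_gb"] < 5:
        return "clear_temp_files"
    return None

def run_agent(goal, max_steps=10):
    state = AgentState(goal)
    for _ in range(max_steps):  # hard cap so a confused planner can't loop forever
        action = plan_next_step(state)
        if action is None:
            break
        state.observations.update(TOOLS[action]())  # act, observe, then re-plan
        state.steps.append(action)
    return state

state = run_agent("Laptop is slow, please fix")
print(state.steps)
```

Nothing in the code fixes the sequence in advance. If `check_device_health` had reported plenty of free disk, the same loop would stop after two steps without ever calling `clear_temp_files`.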

Agentic AI — Full Processes Running on Their Own

Agentic AI is the top of the stack: systems that run entire processes end-to-end without human initiation for each task. Self-improving systems that update their own behaviour based on outcomes. Campaign orchestration systems that plan, execute, measure, and adjust without anyone pressing a button. Multi-agent pipelines where specialised agents hand off work to each other.

This isn't fully mainstream in most enterprise environments yet, but it's close. Microsoft's Autonomous Agents in Dynamics 365 are the clearest near-term example, and the direction of travel is unmistakable.

The distinction from Level 5 agents is persistence and scope. An agent handles a task; an agentic system handles a domain. It runs continuously, coordinates multiple specialised agents beneath it, learns from outcomes, and adjusts its own behaviour over time. You wouldn't manually trigger it for each task any more than you'd manually trigger your email server for each message. It just runs.
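Handling a domain rather than a task looks roughly like the sketch below. It is a toy under explicit assumptions, and every class and agent name is hypothetical. An orchestrator consumes a stream of events and hands each one to a specialised agent. It records outcomes and changes its own routing when a category keeps failing. A finite list stands in for the long-running queue a production system would read from.

```python
from collections import defaultdict

class Orchestrator:
    """Hypothetical domain-level coordinator: routes events to specialised
    agents, records outcomes, and adapts its routing from those outcomes."""

    def __init__(self, agents, min_success_rate=0.5, min_samples=3):
        self.agents = agents                # category -> agent function
        self.outcomes = defaultdict(list)   # category -> [True/False, ...]
        self.escalated = set()              # categories now routed to humans
        self.min_success_rate = min_success_rate
        self.min_samples = min_samples

    def handle(self, event):
        category = event["category"]
        if category in self.escalated or category not in self.agents:
            return "escalated to human"
        success = self.agents[category](event)
        self.outcomes[category].append(success)
        self._adapt(category)
        return "resolved" if success else "failed"

    def _adapt(self, category):
        """Learn from outcomes: stop automating a category that keeps failing."""
        history = self.outcomes[category]
        if len(history) >= self.min_samples:
            if sum(history) / len(history) < self.min_success_rate:
                self.escalated.add(category)

    def run(self, event_stream):
        # In production this would be a loop over a queue that never ends.
        return [self.handle(event) for event in event_stream]

# Hypothetical specialised agents with fixed behaviour, to keep the sketch predictable.
def password_agent(event):
    return True

def hardware_agent(event):
    return False

orch = Orchestrator({"password": password_agent, "hardware": hardware_agent})
events = [{"category": "hardware"}] * 4 + [{"category": "password"}] * 2
results = orch.run(events)
print(results)
```

No one tells the orchestrator to stop automating hardware tickets. It reaches that decision from its own outcome history after the third failure, which is the "adjusts its own behaviour" property in miniature.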

The Big Picture

Each level adds a different kind of capability — and they're often all running simultaneously in the same organisation, whether people realise it or not.

| Level | Autonomy | What it does | Common examples |
|---|---|---|---|
| Machine Learning | None (output only) | Learns patterns from labelled data to classify, score, or predict | Fraud detection, churn scoring, anomaly detection, spam filtering |
| Neural Networks | None (output only) | Handles complex unstructured data: images, speech, large-scale patterns | Computer Vision, Azure AI Speech, image classification, base models for fine-tuning |
| Generative AI | Low (response only) | Takes a natural language prompt and produces content or code | Microsoft Copilot, Azure OpenAI, GitHub Copilot, ChatGPT |
| AI Automation | Medium (actions in defined scope) | AI drives execution of a human-defined workflow, triggering real-world actions | Power Automate + AI Builder, Logic Apps, AI-triggered CRM and email flows |
| AI Agents | High (multi-step goal pursuit) | Given a goal, reasons and uses tools to achieve it across multiple steps | Copilot Studio agents, GitHub Copilot Workspace, Azure AI Agent Service, AutoGen |
| Agentic AI | Full (autonomous processes) | Runs entire end-to-end processes without human initiation for each task | Dynamics 365 Autonomous Agents, self-improving campaign systems, multi-agent pipelines |

The useful thing about this framing is that it clarifies what "AI" actually means in any given context. When someone says they're "doing AI," they could mean any of the six levels above, and those are very different things: anything from a scikit-learn model scoring leads in a spreadsheet to a fully autonomous system running a sales campaign end-to-end.

The progression is about autonomy. At the bottom, AI is a tool that produces an output and stops. By the time you reach agents and agentic systems, the AI is making decisions and taking actions over extended time horizons with minimal human involvement. Understanding where on that spectrum a given system sits tells you a lot about what it can and can't do — and how much you can rely on it to run without oversight.